Dealing with Software Robots

Don't Agree? You are a Bot!

- by Birte Vierjahn

- 07.07.2021

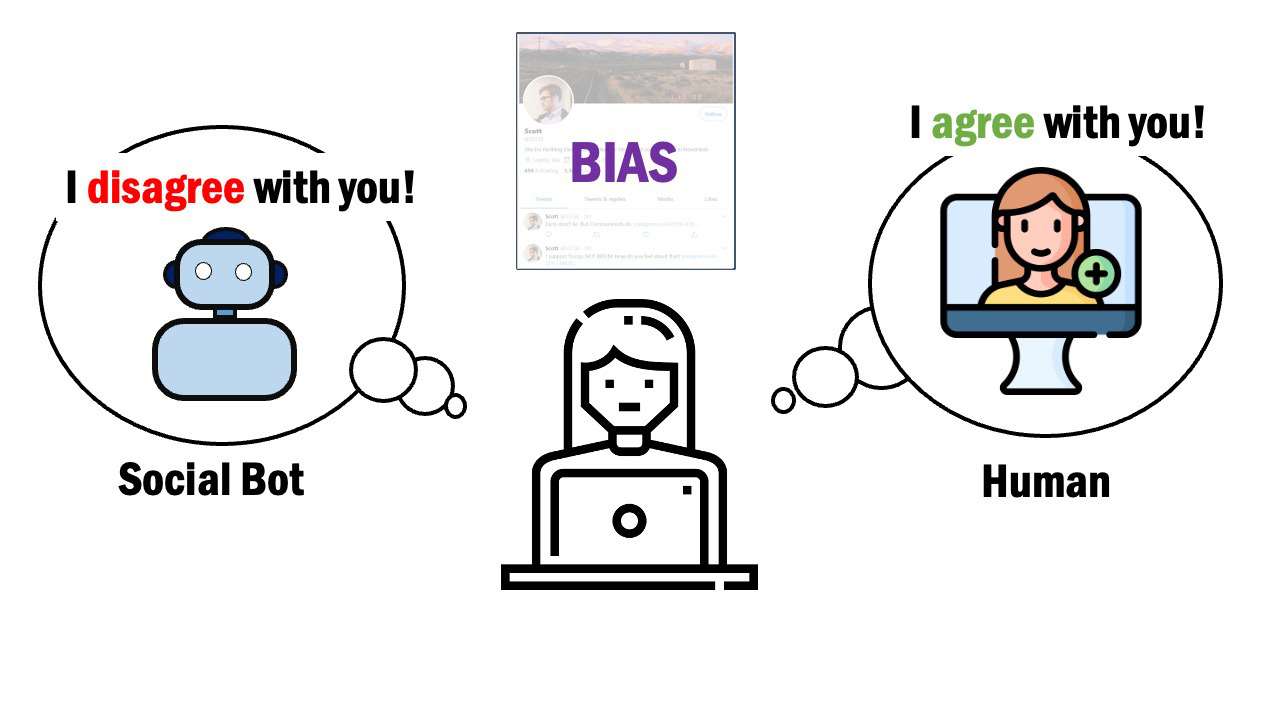

Is a human being writing here, or is artificial intelligence behind it? Anyone who surfs Twitter, Instagram or TikTok has – consciously or not – already encountered social bots: automated profiles that comment, like or write messages. Social psychologists at the University of Duisburg-Essen (UDE) have conducted several studies to investigate how we deal with them. Do we recognize artificial intelligence? Do we accept it? That depends on the topic – and on the bot's opinion.

Bots in general are quite useful: they remind us to drink enough water or provide weather updates. But social bots are also used to promote certain opinions on the web or even to deliberately spread fake news.

In a series of studies, UDE’s social psychologists investigated whether we recognize manipulative social bots, at what point we become suspicious, and when we go as far as checking whether a profile belongs to a real person. For the three online surveys, a total of about 600 participants from the U.S. looked at various Twitter profiles. The profiles strongly favored either the Republicans or the Democrats and varied in how individual or automated they appeared.

"Due to the political system in the U.S., we could assume that participants would clearly favor one party or the other," explains Magdalena Wischnewski, a PhD student and one of the study leaders. "There are few other aspects of life with such a clear dichotomy."

Participants became suspicious when a profile appeared highly automated. However, they were more likely to tolerate this if the account expressed the same political opinion as their own. The opposite held for contrary positions: those who supported the Democratic U.S. president tended to be suspicious of Republican content – and vice versa. Participants mostly used bot-detection software to confirm an opinion they had already formed.

"The results can be transferred both geographically to other countries and thematically to all aspects of life in which there are opposing positions," Wischnewski says. "For us to interact with social bots, they have to be human-like and they must share our opinions. But there are very few of those. It is therefore unlikely that many users will fall for bots in direct contact. Instead, social bots serve to pick up topics so often that they appear to be trending."

* "Disagree? You Must be a Bot! How Beliefs Shape Twitter Profile Perceptions"

Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems,

https://doi.org/10.1145/3411764.3445109

Further Information:

Setup (Open Access): https://osf.io/36mkw/

Magdalena Wischnewski, Social Psychology, +49 203/37 9-2442, magdalena.wischnewski@uni-due.de

Editor: Birte Vierjahn, +49 203/37 9-2427, birte.vierjahn@uni-due.de